Neuroevolutionary Wordle - Neural Net Design

Designing the Neural Net for the Wordle player is the next task in the Neuroevolutionary Wordle series of posts.

There are broadly three sections:

- Input encoder

- Hidden layers and output head

- Output embedding

Explanatory Diagram

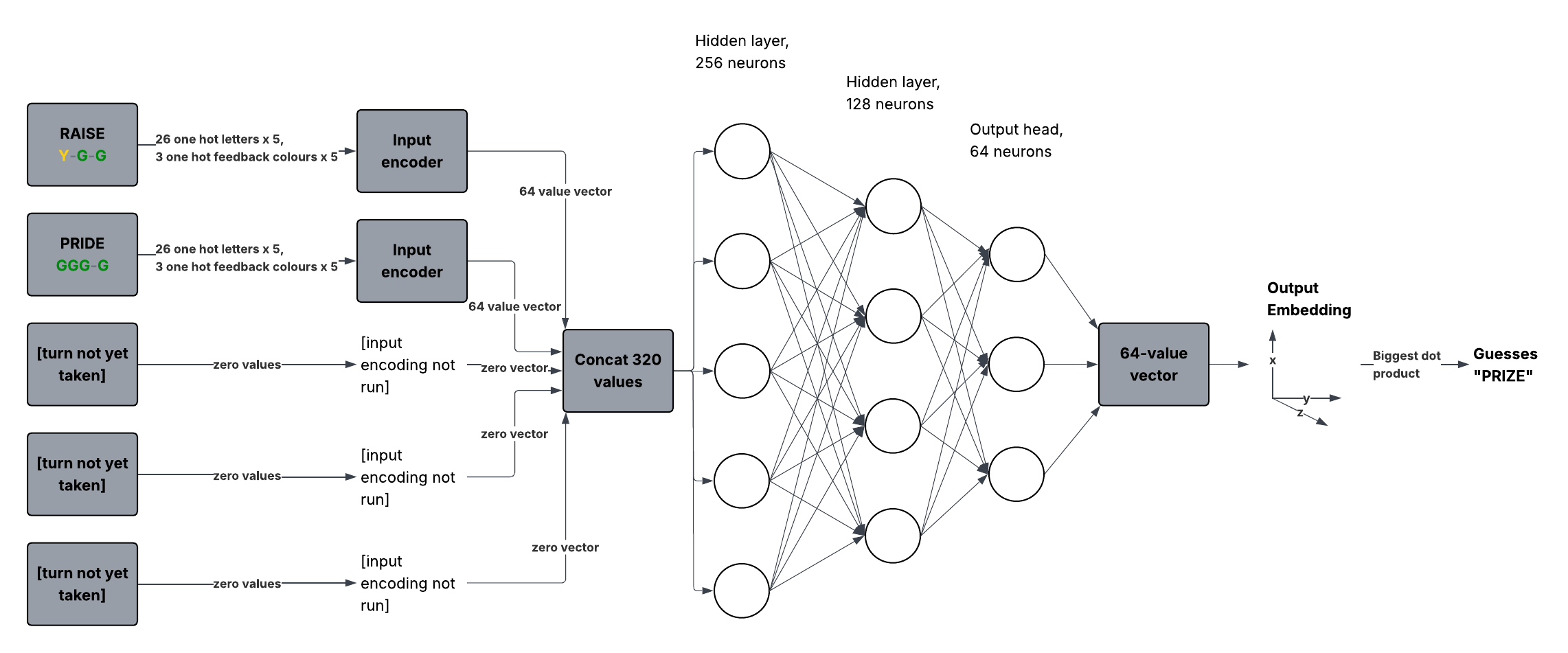

The diagram above shows the overall shape of the policy.

Input Encoding

High level game concept

The high-level concept is that a Wordle game in progress has between 0 and 5 previous turns. Each letter in those turns is green, yellow or grey, depending on whether it appears in the solution and, if so, whether it is in the correct position.

What an input encoder does

An input encoder converts a guess into a vector. It receives 5 letters and 5 colours, and returns a 64-value vector.

There can be up to 5 previous guesses in an in-progress game of Wordle, so the shared encoder is used up to 5 times. If a guess hasn’t been made yet, the model uses \( (0,...,0) \), a hard-coded zero vector, in place of the encoder’s output for that turn.

The input to an encoder is one ‘turn’ of the Wordle game: 5 letters and 5 tile colour feedback items, each of which is represented as a one-hot array.

For example, if the first character of a guess in the Wordle game is A, the one-hot array looks like \( (1,0,0,...,0) \). Of the 26 letters, only the first is a one, because the character is an A.

For the ‘Green/Yellow/Grey’ feedback, each character in the guess is given its colour by another one-hot array, which might look like \( (1,0,0) \) if the letter tile is green, for example.

Each character therefore contributes \( 26 + 3 = 29 \) values. There are five characters in a guess on a Wordle grid, so what goes into an input encoder is \( 29 \times 5 = 145 \) values.
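The encoding described above can be sketched in a few lines of Python. This is only an illustration: the ordering of yellow and grey in the colour array, and the choice to place each letter's 26 values directly before its 3 colour values, are assumptions rather than details of the actual model. The names `encode_turn` and `one_hot` are hypothetical.

```python
import string

LETTERS = string.ascii_uppercase        # 26 letters, with A first
COLOURS = ("green", "yellow", "grey")   # green first as in the text; the rest is assumed


def one_hot(index, size):
    """Return a list of `size` floats with a single 1.0 at `index`."""
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


def encode_turn(guess, colours):
    """Encode one turn (5 letters, 5 colours) as a flat list of 145 values."""
    if len(guess) != 5 or len(colours) != 5:
        raise ValueError("a turn needs exactly 5 letters and 5 colours")
    values = []
    for letter, colour in zip(guess.upper(), colours):
        values += one_hot(LETTERS.index(letter), len(LETTERS))   # 26 values
        values += one_hot(COLOURS.index(colour), len(COLOURS))   # 3 values
    return values


turn = encode_turn("ARISE", ["green", "grey", "grey", "yellow", "grey"])
print(len(turn))  # 145
```

Exactly 10 of the 145 values are ones: one per letter and one per colour.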

What comes out of an input encoder

What comes out of an input encoder is a vector of 64 floats. The vectors for the 5 turn slots are concatenated into \( 64 \times 5 = 320 \) values, which are passed to each neuron in the first dense layer of 256 neurons.
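As a rough sketch of that concatenation, the snippet below joins up to 5 encoded turns and fills the remaining slots with zeros. The function name `build_policy_input` is hypothetical, and placing the zero vectors after the played turns is an assumption; the design only says that missing turns are replaced by zero vectors.

```python
ENCODER_OUT = 64   # values produced per turn by the shared encoder
MAX_TURNS = 5      # previous turns visible to the policy


def build_policy_input(encoded_turns):
    """Concatenate up to 5 encoded turns, zero-padding the unused slots."""
    if len(encoded_turns) > MAX_TURNS:
        raise ValueError("at most 5 previous turns are expected")
    flat = []
    for vec in encoded_turns:
        if len(vec) != ENCODER_OUT:
            raise ValueError("each encoded turn must have 64 values")
        flat.extend(vec)
    # Each missing turn contributes a hard-coded zero vector (assumed to go last).
    flat.extend([0.0] * (ENCODER_OUT * (MAX_TURNS - len(encoded_turns))))
    return flat


# Two turns played so far; these values are placeholders, not real encoder outputs.
two_turns = [[0.5] * ENCODER_OUT, [-0.25] * ENCODER_OUT]
policy_input = build_policy_input(two_turns)
print(len(policy_input))  # 320
```

Because the width is always 320, the first dense layer sees the same input shape whether the game is on its first guess or its last.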

Internal structure of an input encoder

Internally, the input encoder passes its input vector of 145 values to each neuron in a first layer of 128 neurons. A second layer of 64 neurons acts as the encoder’s output head. The outputs of up to 5 of these input encoders, padded with zeros if there have been fewer than 5 previous turns in the Wordle game, make up the 320 values that are passed into the main body of the Neural Net.
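A minimal pure-Python sketch of an encoder with this shape is shown below. The layer sizes (145, 128, 64) come from the design above. Everything else is an assumption made for illustration: the ReLU activation on the first layer, the linear output head, the random initialisation, and the helper names `make_layer`, `dense` and `encode`.

```python
import random


def make_layer(n_in, n_out, rng):
    """Random weights and zero biases for a dense layer (illustrative init only)."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, layer, activation=None):
    """Apply one dense layer: every neuron sees every input value."""
    weights, biases = layer
    out = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
    return [activation(v) for v in out] if activation else out


def relu(v):
    return max(0.0, v)


rng = random.Random(0)
encoder_hidden = make_layer(145, 128, rng)   # 145 inputs -> 128 neurons
encoder_head = make_layer(128, 64, rng)      # 128 -> 64-value output head


def encode(turn_values):
    """Shared input encoder: 145 values in, 64 values out."""
    hidden = dense(turn_values, encoder_hidden, relu)   # ReLU is an assumption
    return dense(hidden, encoder_head)                  # linear head, also assumed


example_turn = [0.0] * 145
example_turn[0] = 1.0            # e.g. the letter A in the first position
encoded = encode(example_turn)
print(len(encoded))  # 64
```

Since the encoder is shared, the same `encoder_hidden` and `encoder_head` weights would be reused for every turn in the game.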

Neural Net Layers

After the input encoders have been used to create a concatenation of 320 values, this is passed to each neuron in the first hidden layer. Each neuron \( j \) computes something like this:

$$ y_j = f\left(\sum_{i=1}^{320} w_{j i} \, x_i + b_j\right) $$

where:

- \( x_i \) are the 320 input values

- \( w_{j i} \) are the weights for neuron \( j \)

- \( b_j \) is the bias term

- \( f \) is the activation function
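The formula for a single neuron translates directly into code. In the sketch below the weights, bias and inputs are made-up numbers chosen so the arithmetic is easy to follow, and ReLU stands in for \( f \) purely as an assumption, since the design does not name an activation function.

```python
def neuron_output(x, w_j, b_j, f):
    """Compute y_j = f(sum_i w_ji * x_i + b_j) for a single neuron j."""
    if len(x) != len(w_j):
        raise ValueError("need one weight per input value")
    pre_activation = sum(w * v for w, v in zip(w_j, x)) + b_j
    return f(pre_activation)


def relu(z):
    return max(0.0, z)


x = [1.0] * 320       # stand-in for the 320 concatenated encoder values
w_j = [0.5] * 320     # made-up weights for one neuron
y_j = neuron_output(x, w_j, b_j=-100.0, f=relu)
print(y_j)  # 60.0  (160.0 - 100.0, then ReLU)
```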

The layers of the Neural Net, then, are as follows:

- 256-neuron layer that receives the concatenated outputs of the input encoders

- 128-neuron hidden layer

- 64-neuron output layer

This output layer is then used with the model’s output embedding, which then returns a 5-letter word.
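To get a feel for the size of this part of the network, the layer widths listed above can be turned into a parameter count. The sketch assumes each of the three layers is fully connected and has one bias per neuron, matching the neuron formula given earlier; the helper name `dense_param_count` is hypothetical. The input encoder and the output embedding are not included.

```python
LAYER_SIZES = [320, 256, 128, 64]   # input width followed by the three layers


def dense_param_count(n_in, n_out):
    """Weights plus biases for one fully connected layer."""
    return n_in * n_out + n_out


counts = [dense_param_count(a, b) for a, b in zip(LAYER_SIZES, LAYER_SIZES[1:])]
print(counts)       # [82176, 32896, 8256]
print(sum(counts))  # 123328
```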

Output Embedding

See the post on output embedding for the Neuroevolutionary Wordle model for more information.

Summary

This is a provisional design, and liable to change as the project progresses. The Neural Net uses input encoders, a three-layer network processing the data from the input encoders, and an output embedding system that turns the output into a playable guess in a Wordle game.